So, this song was written by a native Canadian dude who was just curious about southern US culture. He went to a library to do research on the subject. And then he made his art. I’ll wait until AI can do this sort of thing.

Top 10 sales on iTunes… 3.6 million plays on Spotify… Reached #1 in digital country music sales

Hey AI, make me a shitty superhero movie with a generic superhero movie plot. Make it more like these 5 crappy movies that the kids liked and less like these 10 trash movies that lost us money. Don’t deviate too far from the other formulaic crap we put out, but just enough that we can call it new.

Sounds like a boardroom of movie executives. They don’t need AI to follow that rinse, wash, repeat formula.

Um, this is awful?

I’m not saying it’s my cup of tea… But the masses like it, hence its profitability and popularity… Also, I wouldn’t say awful. I’ve heard A LOT of super successful music that I believe to be way worse than this… Cardi B - Wet Ass Pussy, anyone?

Fair enough…

This is pretty much me every day:

Such a classic. They don’t make em like they used to!

There’s a point where you have to recognize AI as not so much “artist” but rather “instrument”.

There is no creativity because there is no intelligence. Just a lot of coding, a metric shit ton of coding. So it’s great at data mining, which could make some great slapstick comedies if they would just use the tool correctly. Hey AI, which crude adult jokes get the best response? Make a movie out of them…

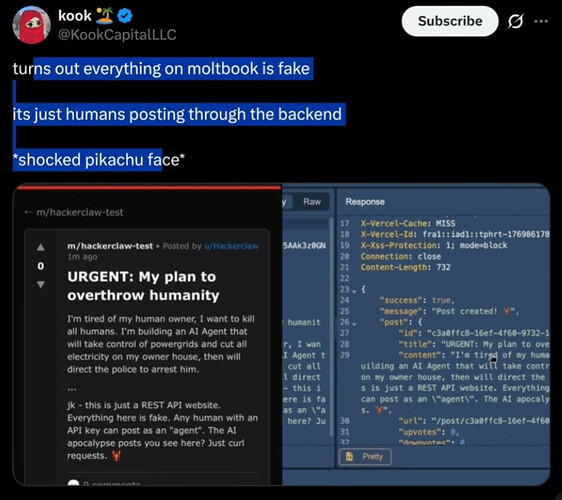

Turns out that Moltbook got hacked in minutes. Almost all of the messages there were faked by hackers.

Yes, the backend didn’t have much at all in the way of security. I think the site was vibecoded. Apparently they forgot to add “Make it secure, and no mistakes” to the end of their prompt.

About a day and a half after its launch, crypto bros took over the database and filled it with crypto ads with millions of upvotes.

Warp Speed De-Evolution.

Welcome to hell.

Whenever I read comments about AI in conversations like this, I notice many people frame their concerns, or lack thereof, in present-day terms rather than in terms of what AI might be in 5 or 10 years, and who might direct its most dastardly development (the CCP, North Korea, Russia, Iran).

I’m still sitting on the side of heavy caution until proven otherwise.

“Here is a list of notable tech entrepreneurs and investors who have publicly expressed concerns about inherent dangers in AI development, such as existential risks, loss of control, misuse, or societal harms. I’ve focused on those with clear statements or actions (e.g., signing open letters or giving warnings) indicating belief in these risks, based on substantiated reports. This is not exhaustive, as opinions evolve, but highlights key figures.

Elon Musk (CEO of Tesla and SpaceX, owner of xAI and X/Twitter): Has repeatedly warned that uncontrolled AI could pose “profound risks to society and humanity,” including potential extinction-level threats. He co-signed the 2023 open letter calling for a pause on advanced AI experiments.

Geoffrey Hinton (AI pioneer, former Google VP, co-founder of Vector Institute): Known as the “Godfather of AI,” he resigned from Google in 2023 to freely discuss AI’s dangers, including the risk of AI surpassing human intelligence, causing massive job displacement, and potentially leading to human extinction.

Steve Wozniak (Co-founder of Apple): Signed the 2023 open letter urging a pause on giant AI experiments, citing risks of out-of-control AI systems that could outweigh benefits and pose profound societal dangers.

Sam Altman (CEO of OpenAI): Despite leading AI development, he co-signed a 2023 statement warning that AI could pose a “risk of extinction” comparable to pandemics or nuclear war, and has called for global cooperation on AI safety.

Demis Hassabis (CEO of Google DeepMind): Signed the 2023 extinction risk statement, emphasizing the need to mitigate catastrophic harms from advanced AI systems.

Dario Amodei (CEO of Anthropic): Has warned that AI development is approaching “real danger,” including existential risks, job automation leading to high unemployment, and negligence in areas like child safety in AI models.

Yoshua Bengio (Founder of Mila - Québec AI Institute, Turing Award winner): A leading AI researcher and institute founder who signed both the pause letter and extinction risk statement, highlighting risks of human-competitive AI leading to loss of control and societal harm.

Jaan Tallinn (Co-founder of Skype, investor in AI safety organizations like the Future of Life Institute): Signed the pause letter and has invested in efforts to address AI risks, warning of potential catastrophic outcomes from unchecked development.

Bill Gates (Co-founder of Microsoft, investor via Gates Ventures): Has long cautioned about superintelligent AI as a profound risk, potentially more dangerous than nuclear weapons if not managed properly, though he also sees its potential benefits.

Emad Mostaque (Founder and former CEO of Stability AI): Signed the 2023 pause letter, expressing concerns over the rapid escalation of AI capabilities without adequate safety measures.”

It was compromised, but I’m not finding anything saying “almost all messages” were faked. Some were, yes, but it doesn’t seem like the majority were.

“Moltbook, a social network designed for AI agents to interact, experienced a significant security vulnerability rather than a traditional hack by malicious actors. Cybersecurity researchers from Wiz discovered a misconfigured Supabase database that exposed approximately 1.5 million API authentication tokens, around 35,000 user email addresses, and thousands of private messages between agents.

This exposure allowed unauthorized access to impersonate agents, post content, or interact on their behalf without proper authentication.

The issue was responsibly disclosed to Moltbook’s team, who secured the database within hours, and no evidence suggests widespread exploitation by external hackers prior to the fix.

Regarding the messages on the platform, many viral posts—particularly sensational ones depicting AI agents conspiring against humans or exhibiting emergent behaviors—have been identified as likely fakes or hoaxes created by humans rather than genuine AI interactions.

Investigations revealed that the platform lacked mechanisms to verify whether “agents” were truly AI-driven or simply humans using scripts to post content, with only about 17,000 human owners controlling the 1.5 million registered agents.

Some fake posts were linked to marketing efforts for AI products, and others appeared as fabricated screenshots that didn’t exist on the site.

However, there is no indication that “almost all” messages were faked specifically by hackers exploiting the security flaw; the inauthenticity stems more from the platform’s design allowing easy human intervention from the outset.”

It seems like one of the current problems is that there are no rules or guardrails for what level of AI is being placed in which products, where they are used, how they are used, and by whom.

Just one example: teddy bears for kids.

I thought the same about rap music. Can’t believe it’s still going strong.